Enterprise ETL/ELT Pipeline Engineering

Enterprise ETL/ELT Pipeline Engineering

Is Your Data Supply Chain Broken?

Integration Bottleneck

Is most of the time you spend fixing broken integration pipes or analysing the output from those pipes?

The Data Swamp

Are you dumping raw data into a data lake without structure, creating a chaotic environment that is difficult to query or manage?

Latency Lag

Is your sales or operational data taking days to reach dashboards, forcing leaders to make decisions using outdated information?

Compliance Risks

Are sensitive datasets being loaded into your warehouse without proper masking, encryption, or anonymisation controls?

Benefits of Robust Data Pipelines

- Absolute Data Integrity: With our ability to create automated cleaning and validation rules, the accuracy of your data is guaranteed as well as its standardisation, so that it is always ready for analysis.

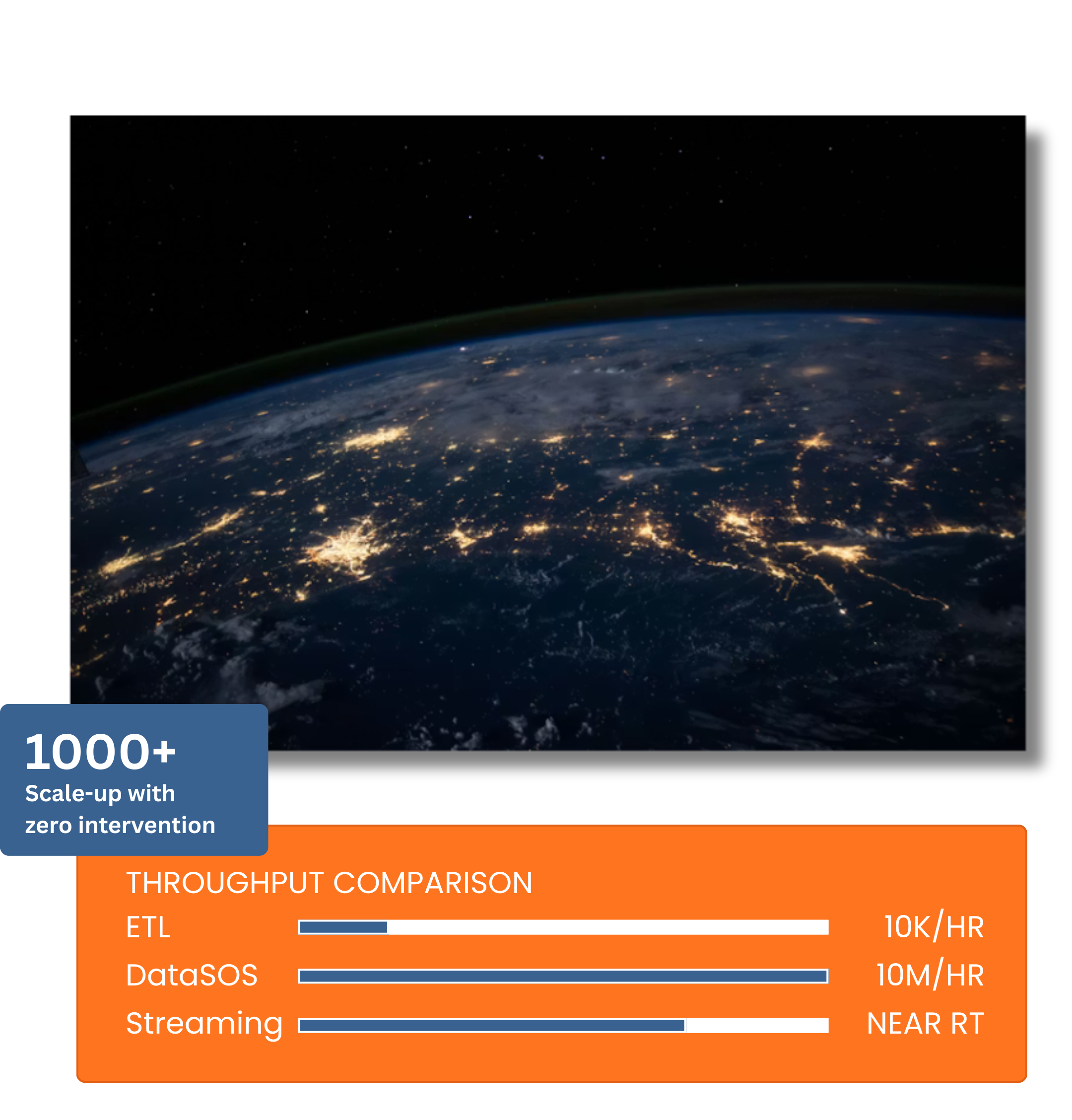

- Scalability on Demand: Our Cloud-based architecture has been designed to scale up to 1000 times the amount of rows that were previously processed by your ETL (from 10,000 to 10 million) with no manual intervention needed.

- Real-Time Agility: Upgrade your speed at which you can process your data changes from batch processing to near real-time streaming to meet market changes.

- Governance & Compliance: All security protocols get integrated into the pipeline, and all sensitive data is masked before being sent to the analytical layer for GDPR/CCPA compliance.

Services: End-to-End Data Pipeline Engineering

Custom ETL Development (Extract, Transform, Load)

- The Methodology: We first extract the information from your source system(s). Then we process that data securely on a staging server (masking, cleaning, etc.) and then only add the completed product to your final destination.

- Best For: Scenarios requiring heavy data privacy (masking PII), legacy on-premise systems, and minimising storage costs in the data warehouse.

Modern ELT Architectures (Extract, Load, Transform)

- The Methodology: We extract data and immediately load the raw state into your cloud warehouse (Data Lake). Transformation logic is then applied inside the warehouse using SQL or dbt, allowing for flexible, on-the-fly analysis.

- Best For: Big Data analytics, handling unstructured data (JSON, Logs), and environments requiring rapid ingestion speed (Real-Time).

Data Cleansing & Normalisation

- Standardisation: We make sure all data is in the same format (i.e., currency, date style, name format, etc.) so it can be easily used by different systems.

- Deduplication: Our system identifies duplicate customer information within multiple data sources and consolidates these duplicates to create one single “customer” record with no conflicting data.

- Enrichment: We add additional data (such as price data from competitors) to the data you already have; this helps enhance your sales data and/or performance records.

Pipeline Orchestration & Monitoring

- Automated Workflows: Deploying proven tools like Apache Airflow or Prefect to handle dependencies, jobs and tasks in a sequence that is done automatically.

- Active Alerting: Our pipelines are continuously monitored for performance & reliability. If a schema changes or a job fails, our engineers are alerted instantly—often resolving the issue before you realise the data is late.

Data Integration Across Verticals

Retail & E-Commerce

Utilising data integration across multiple suppliers to collect SKU, price and inventory data from more than one hundred platforms and compile into a singular catalogue for merchandising/forecasting/pricing decisions.

Finance & Investment

Combining large amounts of transactional data from a variety of financial systems/payments gateways to improve fraud detection in real time, enhance reconciliation speed and improve an organisation's preparedness for audits.

Healthcare

Securely processing clinical and patient data through our compliance-driven data transformation pipeline that preserves the integrity of the data for all future reporting and insight generation.

Logistics

Taking raw vehicle GPS and sensor data in the form of continuous telemetry streams, convert them to actionable insights for route optimisation, delivery timeliness, and overall fleet performance.

Engineering Resilience Over Generic Tools

- Agnostic Architecture: We are not tied to a single tool. Whether you need Python-based scripts, SQL-based transformations, or cloud-native solutions (AWS Glue, Azure Factory), we build what fits your stack.

- Future-Proof Design: We build modular pipelines. If you switch your CRM or database next year, we only need to swap a single module, not rebuild the entire engine.

- Cost Optimisation: Particularly with ELT, cloud costs can spiral. We optimise query performance and storage strategies to keep your data bills manageable.

Frequently Asked Questions About Data Processing

What is the difference between ETL and ELT processes?

What are the core steps in your ETL methodology?

- Extraction: Collecting data from different sources (APIs, scrapers, legacy systems).

- Staging & Cleansing: Standardisation of formats & masking of sensitive PII.

- Transformation: Record duplicates and data enrichment with external sources.

- Loading: Bring governance-ready assets to you.

- Monitoring: Utilising automated workflows (Airflow/Prefect) to ensure continuous uptime.